"Things are not going to change until change happens in the U.S."

Disinfo Talks is an interview series with experts who tackle the challenge of disinformation through different prisms. Our talks showcase different perspectives on the various aspects of disinformation and the approaches to countering it. In this installment we talk with Danny Rogers, Co-Founder and Executive Director of the Global Disinformation Index.

Tell us a bit about yourself, your background, and how you got involved in disinformation.

I used to work for the Applied Physics Lab (a national laboratory affiliated with Johns Hopkins University in the US), where I led technology development projects for cyber and information operations work. That is where I cut my teeth on cyber and information warfare. Then, about 2-3 years ago, after the 2016 US presidential elections, I started asking myself, just like many other folks: “What happened? What can I do to help?” As an American and an intelligence professional, I felt offended looking at the kinds of maneuvers that were levied against the United States in that election – so crude, and yet so effective. Since I was no longer in the government sector, I thought I might do something about it by focusing on the commercial side of the disinformation problem. It was apparent to me that the problem is, in part, a product of a toxic economy: when you commoditize and monetize attention at all costs, what you get is a collection of business models that create this destructive externality. Having a foot in cybersecurity and another in the commercial world, I teamed up with a group in London and together we created what has now become the Global Disinformation Index (GDI). The idea was to create a catalyst for change and to disincentivize disinformation activity by providing the industry with definitions and data. The GDI, an independent, neutral, transparent organization, does just that by helping to define the problem and build the datasets that the industry needs in order to determine what is and isn’t disinformation.

How big of a problem is disinformation nowadays? We’ve come a long way since 2016. Where are we right now? Where are we heading? How worried should we be?

Initially we focused on what we call “ad-supported disinformation.” Since then, we’ve been making a lot of additional headway. The original example from 2016 was ABCnews.com.co, which was literally a fake ABC News website in the US. Similar fake sites existed then and continue to this day to capture and monetize audiences, most commonly via programmatic advertising, but also via merchandising and direct donations. We’ve made serious progress in the last 18 months in reducing the number of ad auctions on such sites and cutting off revenue streams. Some of them are among the most trafficked sites on the web, but in terms of the total number of sites, there are not that many – which is good news. The bad news is that it doesn’t take much to seed broader movements on the open web. You can see this with the halting progress in vaccinating the world’s population against COVID. The challenge we face in the US is not vaccine availability, but purely the infodemic. People have been convinced through the online disinformation ecosystem not to get vaccinated, eventually becoming sick and causing more harm. In that sense, while the number of websites acting as purveyors of disinformation isn’t that big, it’s an enormous problem in terms of impact, to the point that it poses a threat to democracy. Around the world, authoritarian regimes are increasingly coming into power, which I see as a direct result of the collective information environment being poisoned by these toxic business models. The volume is not that big from a content perspective, but the impact cannot be overstated; the risk of harm to individuals, to society, to me. This is a true cultural malignancy and that’s why it’s so important that we all work to combat it.

Who are the actors spreading disinformation and what is their motivation?

We like to think of the threat-actor spectrum on a two-dimensional plane. On one axis, you have the spectrum of motivations, from ideological to geopolitical, including actors motivated purely by profit. For example, my friend Cameron Hickey reported for PBS on Cyrus Massoumi, who ran a couple of websites (such as patriotviral.com) around the 2016 election and was pulling in millions of dollars a year, monetizing those sites through advertising and merchandise. In this world, where certain ideologies align heavily with financial incentives, it’s hard to separate the two motivations. On the other axis you have the degree of organization, ranging from highly centralized actors such as the Internet Research Agency and Cambridge Analytica, through influence operators (state-affiliated or for hire), all the way to the true grassroots trolls. Within this ecosystem there are interactions: the professional influence operators are watching what the grassroots community is doing and using that as a testing and seeding ground for narratives that stick. The highly organized actors will pick up on narratives that seem promising and will put their professional resources behind them. The 5G conspiracy theory is a great example of that. It started with a few YouTube videos and blogs that no one knew about, but as it started gaining traction, the state-controlled Russian network RT did a six-part documentary series on it and now it is in the public consciousness. We use the analogy of brush-fire arson: a few small campfires suddenly erupt with a “whoosh” and converge, causing a huge forest fire. Likewise, all these different actors working for their own interests come together in this two-dimensional spectrum, resulting in an emergent phenomenon of highly developed conspiracy theories.

How can you tackle this in an effective, large-scale way, given that you’re dealing with different actors and different motivations?

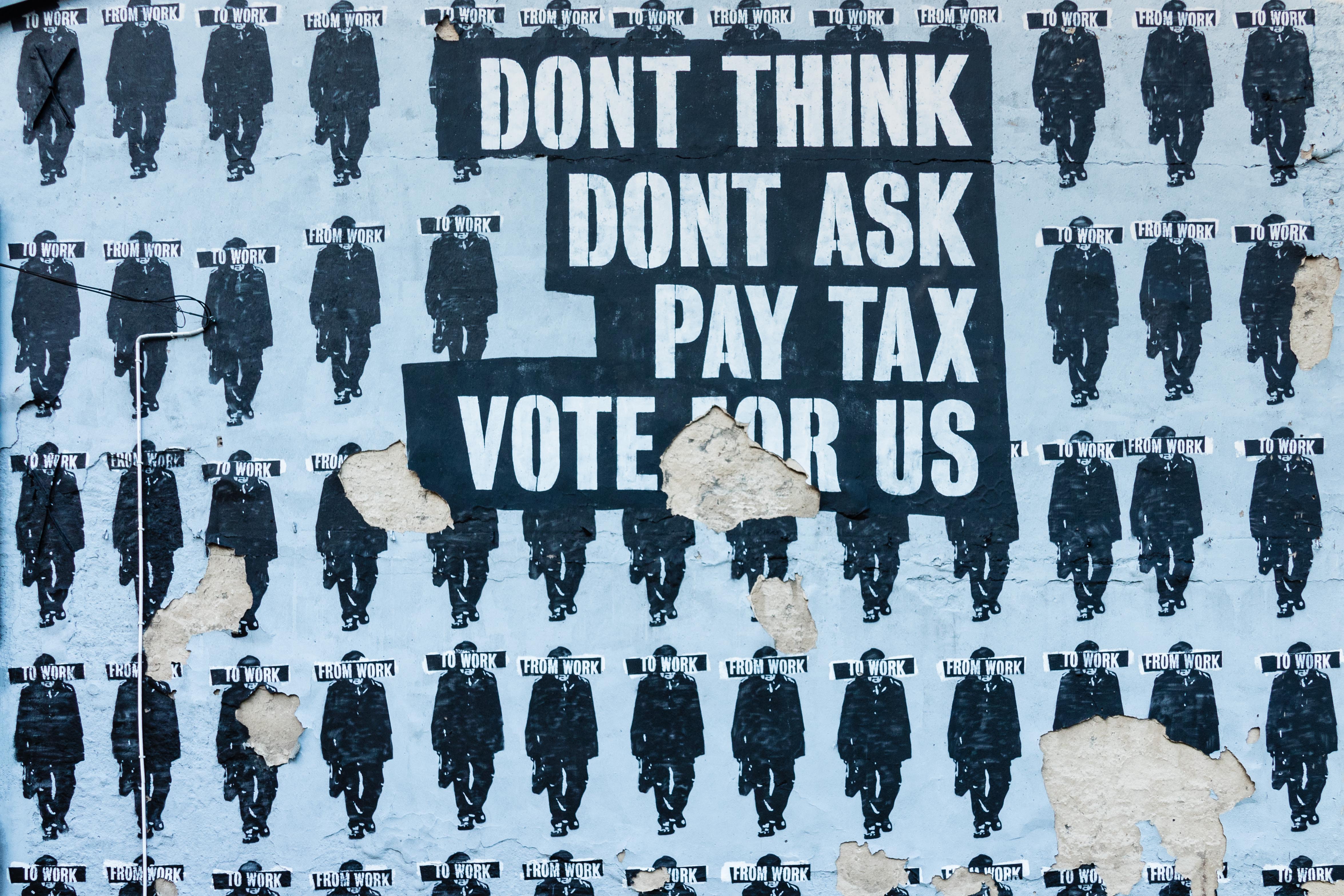

It’s going to take everyone working together. We carved out a piece for ourselves specifically around defunding and providing data to some parts of the platform community. In my mind, it will take society investing in three categories to combat this problem. The first category is obviously the long-term investment in media literacy, which is why there’s a lot of talk about the younger generation. The younger generation understands that it doesn’t take much to put something online; they have a more natural skepticism. Investing in media literacy is important, but it is a long-term investment and doesn’t help us with the midterm elections coming up soon. The second category is platform reform, such as the broader legislative and regulatory efforts (the DSA and DMA in the EU, and the Online Safety Bill in the UK). In the US, three areas are going to act as big regulatory levers that will go a long way towards stemming the flow of disinformation. One is antitrust enforcement, the area where we’re seeing the most active regulatory machinery working right now. The second is privacy regulation, which I think will help counterbalance those algorithmic machines that run on personal data, especially in the US. The third is Section 230 of the Communications Decency Act of 1996, which has been getting more attention in Congress since the Facebook war. These three areas are part of platform reform overall, in addition to all the work we’re doing, and the work that trust and safety teams do. The third category is counter-messaging, what I like to call the “ground war” of disinformation. While we work on long-term investments (like media literacy) and medium-term goals (platform reforms and regulations), go out there and counter-message by buying up the space and paying qualified people to fill it up! People’s attention is for sale.

It’s not about arguing with the people who are peddling disinformation; it’s not about getting into “that’s not true” and debunking or fact-checking, but about effective counter-messaging. It is an art! It must be done in a way that doesn’t inadvertently amplify the misinformation or further entrench people’s positions. These are the three areas that will really help to win the day. Once they become mature and robust, we’ll see our whole world in a much better place.

Has the pandemic changed anything in terms of awareness of the problem and the motivation to tackle it?

The way the pandemic changed things was to tie all disinformation narratives to the pandemic. Consider the anti-vaxxers’ preoccupation with the measles vaccine. Now the 5G conspiracy theory world has shifted to COVID, and is saying that COVID is the result of 5G. Everyone’s tying their narrative to the pandemic, because that is what is capturing most people’s attention. We see platforms acting because the pandemic has put the harms of disinformation front and center. Pre-pandemic, we would talk about people lying on the Internet, but there was this sense that what happened online was “online” and not in the “real world.” I think this distinction is misleading because if there’s anything that the pandemic has taught us, it’s that there is no distinction between online and the real world. A study by a Swiss economist demonstrates a strong, robust statistical correlation between viewership of two Fox News shows (one taking the pandemic seriously, the other not) and differing county-level pandemic health outcomes (death and infection rates). People who watched the show that downplayed the pandemic and, as a result, did not take it seriously got sick and died at higher rates than people watching the other show. Our information environment affects us as human beings. This has become apparent, and that’s why platforms have suddenly started to act. Before, platforms pretended to be neutral, but they are not. Their algorithms make choices every millisecond about which items to put in front of people from the infinite sea of content to keep their attention. As whistleblowers have confirmed, such decisions are algorithmically made to favor certain content, which is why platforms, suddenly, at the beginning of the pandemic, were able to start amplifying authoritative information from the CDC and the World Health Organization, leading to positive outcomes. All of that has been the net effect of the pandemic. We can no longer claim that platforms are powerless.

What is preventing reforms from happening tomorrow?

The filibuster. I think the filibuster is one thing that’s preventing it in the US. Another thing, and this is not my original thought, is that the tech industry has for the past 20-25 years enjoyed its status as the flagship industry in the United States. Cities are falling over themselves to become the next Silicon Valley; we are so enamored of tech. That’s slowly changing, but in the United States, without tech, what’s our flagship industry? That status has blinded us to the toxic externalities that the whole tech industry has created. There’s going to be a slow shift, but it will take a while, and these companies have a huge amount of political power. Facebook is the largest spender of lobbying dollars in Washington right now. As these business models change, all of us will be affected.

Could we see the EU taking a global leadership role in that regard?

Europe will have a significant effect for two reasons. One is that Europe recognizes the toxic effects, because it has experienced them. Europe has seen the results of mass data collection, thus there is a natural distrust. The second is that the companies driving this global change are not European companies. That’s why Europe is more willing to act. That is also why its actions will have a serious impact in driving the conversation; but they aren’t going to be the bulk of the conversation. Things are not going to change until change happens in the US, because, ultimately, these companies are American. Europe is certainly providing templates for what to do and helping to drive the conversation towards a safer, more productive place, but until Washington follows suit, I don’t think it’s going to have the effect necessary to bring about the large-scale change needed to make a difference.

Danny Rogers is Co-Founder and Executive Director of the Global Disinformation Index.

This interview is published as part of the Media and Democracy in the Digital Age platform, a collaboration between the Israel Public Policy Institute (IPPI) and the Heinrich Böll Foundation.

The opinions expressed in this text are solely those of the author/s and/or interviewee/s and do not necessarily reflect the views of the Heinrich Böll Foundation and/or of the Israel Public Policy Institute (IPPI).
